TOP STORIES

Calabrio Offers a New Solution for Quality Monitoring and Analysis of Chatbots

Workforce performance solutions provider Calabrio recently announced a new suite of Bot Analytics tools for Quality Management (QM). Bot Analytics …

Broadvoice Expands Channel Partner Program in CCaaS Market with Veteran CX Hires

Broadvoice, a provider of omnichannel contact center and unified communication solutions for SMBs and business process outsourcing firms, expanded its …

A Look at GenAI and Digital Twins, with Data Presented by Info-Tech

A new industry resource from Info-Tech Research Group lends credence to comprehensive practices powered by generative artificial intelligence (GenAI) …

Businesses Face Security Woes in the Age of AI

Organizations may struggle to keep pace with evolving security landscapes, particularly in the face of AI advancements and the growing …

LEARNING CENTER

The CX Hot Six for Telecoms

A guide to customer experience technology that delivers on business KPIs

LATEST FROM COMMUNITIES

BreachRx Secures $6.5M Seed Funding

BreachRx closed a $6.5 million seed round, led by SYN Ventures, with additional support from Overline.

Assessing IoT Innovator LTIMindtree: Its 2023-24 Successes to Date and a Peek at What's Next

IoT Evolution World has presented a brief rundown of LTIMindtree's successes during FY24, as well as a peek at what's to come…

Bigleaf Networks and NHC Partner to Optimize the Edge

New Horizon Communications Corp. (NHC) entered a strategic collaboration with Bigleaf Networks to offer network communication…

Secure the Everywhere Work Landscape: Ivanti Launches EASM and Platform Upgrades

The recently released Ivanti Neurons for External Attack Surface Management, or EASM, helps combat attack surface expansion w…

Trellix Teams Up with Google Chrome Enterprise for Protection Against Insider Threats

Cybersecurity firm Trellix, known for its extended detection and response (XDR) solutions, has partnered with Google Chrome E…

Embracing the Future With Sustainable IT Practices

In our fast-paced digital age, the shift towards sustainable IT is becoming increasingly critical. This shift, led by compani…

VulnCheck Closes Funding Round at $7.95M to Power Up Next-Generation Vulnerability Management

VulnCheck recently closed its seed funding round at a total of $7.95 million, with $4.75 million in new funding.

4 Key GFI Products Now Powered by AI

GFI announced the integration of its CoPilot AI component into four of its core products.

3Phase Makes the Switch: Ooma AirDial Replaces Legacy POTS for Reliable Elevator Communication

Ooma announced that 3Phase selected Ooma AirDial as the exclusive POTS replacement solution to recommend to its customers.

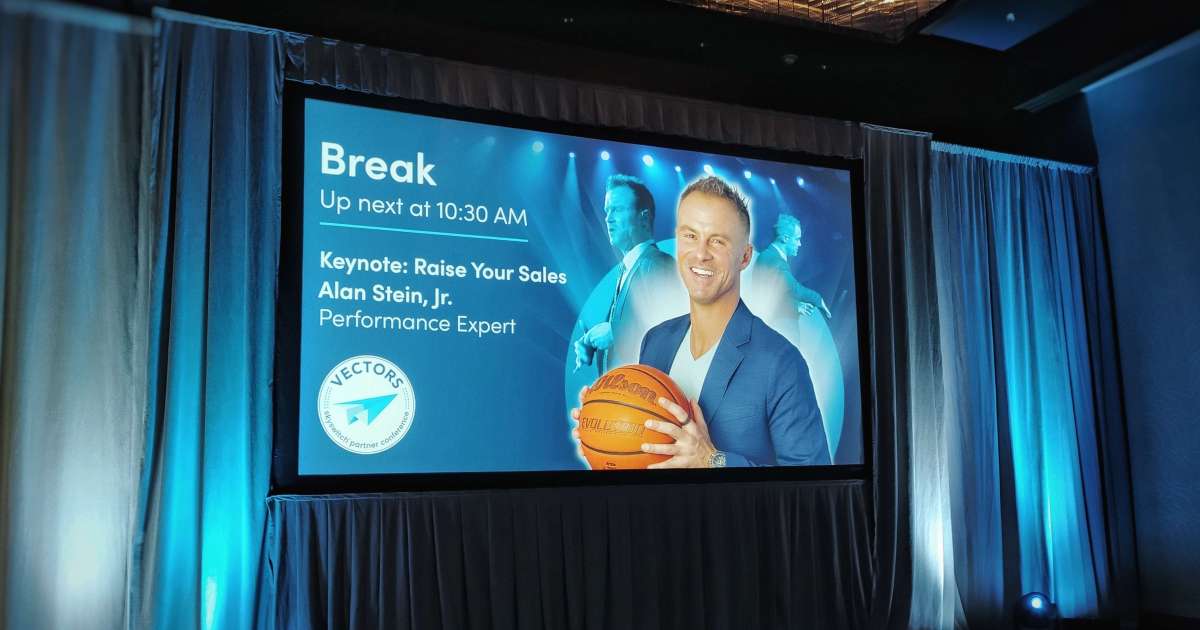

A Winner's Mindset: Alan Stein Jr. Helps Businesses Build Winning Teams

At SkySwitch Vectors 2024 in downtown Nashville, Tennessee, last week, the keynote speaker was Alan Stein Jr. He stylishly pr…